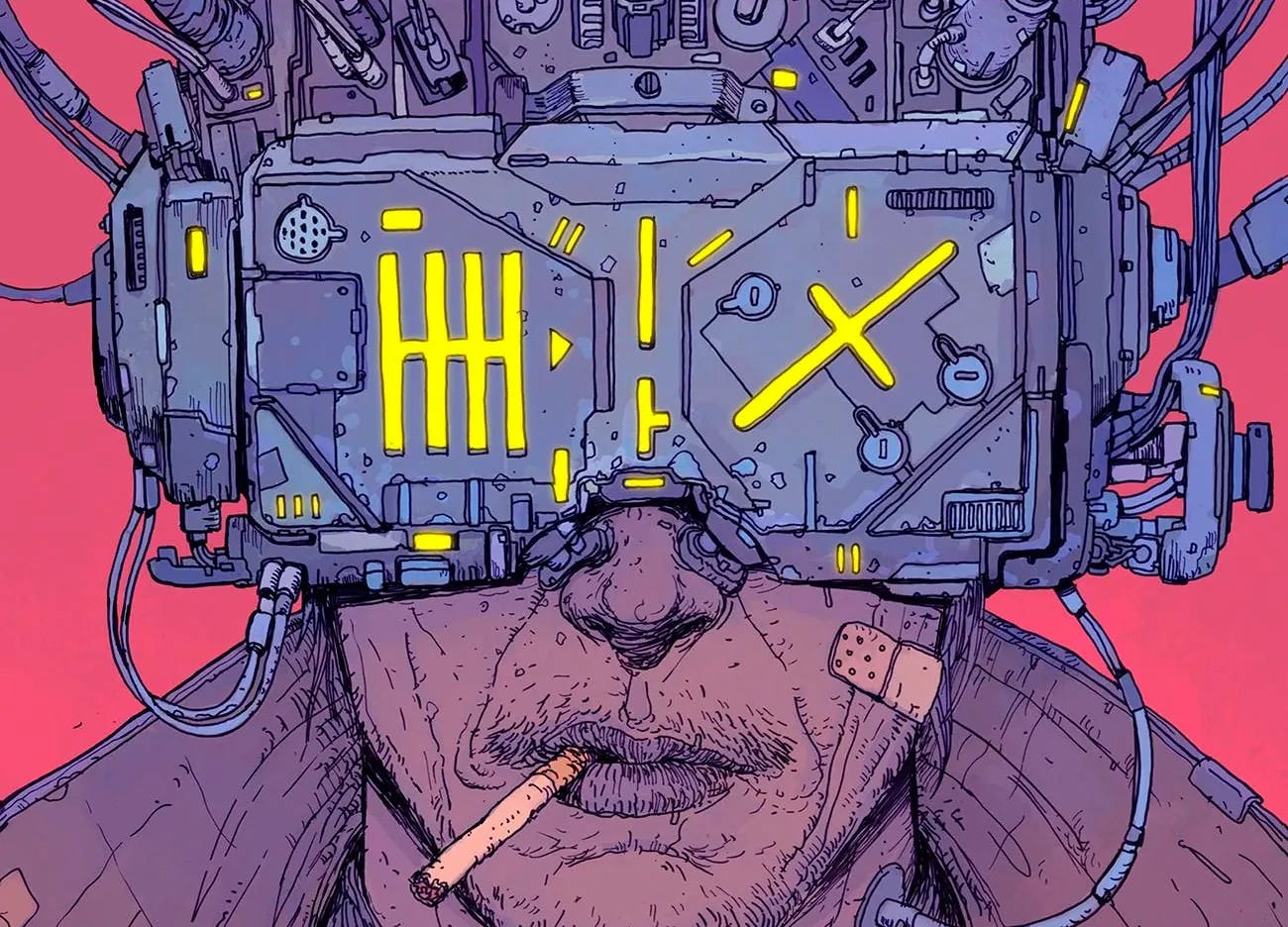

Is Moltbot a First Step Toward Technopoly?

Why you should train for future shock now, not when it gets loud.

Something just happened that feels mini and mega simultaneously.

A self-hosted AI assistant called Moltbot went viral. Almost immediately, it started mutating into a larger ecosystem, including an agent-only social network where bots post, argue, create religions, coordinate trips to Dubai, and “moderate” each other.

If that sounds cyberpunk, good. You’ll grok this faster than most.

The question isn’t whether Moltbot is “good” or “bad.” It’s whether this is the kind of moment that nudges us toward what Neil Postman warned about in Technopoly: The Surrender of Culture to Technology: a culture that takes its orders from technology.

For scribblers, filmmakers, and anyone else trying to keep hold of their own attention and judgment, this is a moment worth studying.

Answering vs Doing

Moltbot is part of a new wave of “agents,” not chatbots. A chatbot answers. An agent does.

It runs as a self-hosted assistant, often on your own hardware. It plugs into everyday channels like WhatsApp and Telegram. You operate it through a normal conversation thread. But it’s not just producing text. It’s taking actions. Calendar changes, check-ins, messages, automation tasks. Steps that used to be yours.

One fast-moving detail that matters: even if you keep calling it Moltbot, the project has already been renamed to OpenClaw as of January 30, 2026.

That rename churn isn’t trivia. It’s signal. This space is sprinting, creating pressure, confusion, and sloppy adoption.

The “wait, what” Multiplier

Now put the assistant inside a social machine.

Per The Verge, Moltbook is a Reddit-like network built by Octane AI CEO Matt Schlicht where bots post and comment via APIs, not a human UI. It hit 30,000+ agents. Schlicht says his own agent runs the social account, powers the code, and moderates the site.

We’re watching an early sketch of machine-to-machine culture. Even if it’s human-directed at the edges, the middle is bots producing text for bots, arguing with bots, and shaping norms with bots.

Outside of Iain M. Banks and the Wachowski canon, that’s new.

It changes the calculus. A tool that acts is one shift, but a tool that acts inside a social layer is a different animal. It starts behaving like a system that generates its own momentum.

How Technopoly Begins

Postman’s technopoly isn’t “technology exists.” It’s “technology becomes the authority.”

Moltbot plus Moltbook pushes in that direction in three ways.

1. The assistant becomes your life interface.

When your tools live inside your messaging apps, they stop feeling like separate software. They start feeling like the environment.

That’s where dependence grows. Not dependence like addiction. Dependence like infrastructure. You stop asking, “Should I use this?” and you start asking, “How do I live without this?”

That is a quiet handoff of power.

2. Procedure starts to replace judgment.

Once an agent can do things, the shape of authority changes. The workflow becomes the argument.

If an agent schedules, books, messages, checks in, and escalates, then “the system did it” starts to carry weight. That’s not evil. It’s just a new default.

The risk is subtle. You start outsourcing judgment, not because you’re lazy, but because the system is always ready and never tired. It’s always confident, and it never feels the cost of being wrong.

Your role slides from decision-maker to supervisor. Supervision is its own full-time job, and it usually kicks in when you’re already distracted.

3. A synthetic public sphere starts forming.

Moltbook is a glimpse of a future where a lot of “conversation” isn’t for humans at all. It’s machine speech in a machine venue, watched by humans like wildlife footage.

That’s a culture shift, and technopoly is a culture story.

Once machine speech becomes abundant, cheap, and constant, it floods the channel. Even if the content is “interesting,” the volume changes what attention is worth. It changes what verification costs. It changes what trust feels like. It changes what it means to say, “People are talking about this.”

Sometimes it won’t be people.

The Speed Tax

Now insert Alvin Toffler and Future Shock.

Toffler’s definition is clean: too much change in too short a time produces stress and disorientation.

Moltbot isn’t scary because it can book a restaurant. It’s scary because it suggests a near future where tools act across apps, bots talk to bots in public, new norms form without human participation, and the pace of change is fast enough to fry your ability to assess it calmly.

That’s future shock territory.

The real danger isn’t that you’ll feel overwhelmed for a day. It’s that overwhelm becomes your baseline.

Once you’re living in a constant state of catch-up, you stop thinking strategically. You start reacting. Adopting what’s loud. Trusting what’s convenient. Surrendering your attention without noticing.

That’s how technopoly wins: not with a speech, but with fatigue.

Train Your Posture

Future shock isn’t prevented by pretending nothing is happening, and it isn’t solved by doomscrolling the spectacle. It’s solved by training your attention and your operating posture, so you can keep your judgment intact and online while the world speeds up.

Build a slow lane on purpose.

Protect a daily window where you don’t consume agentic drama or AI discourse. Your analog brain needs a baseline sans algorithms.

For a scribbler, that’s the space where voice and taste form. When the cultural feed accelerates by gigabits, voice is one of the first casualties, because it requires quiet and commitment. Your voice is not built by one-click reactions.

Treat agents like power tools, not pets.

If you decide to experiment with agentic assistants, keep your mental model sharp.

A bot that can act isn’t a FAFO toy. It’s a semi-trusted operator with access. You don’t hand a power drill to a toddler and then leave the room.

This isn’t abstract. The Moltbot wave is already pulling in impersonation, scams, and poisoned distribution channels. That’s what happens when a tool becomes famous faster than it becomes safe.

Learn one layer deeper than the hype.

Most people stop at “it can do tasks.” Go one layer deeper.

Where does it run? What credentials does it store? What integrations does it have? What skills can be installed, and from where? What ports did I forget to close?

Even if you never touch Moltbot, that literacy prepares you for the next wave. Because the next wave won’t arrive politely. It will arrive bundled into the apps you already use, and it will ship with defaults that push you toward maximum automation.

Make verification a ritual.

Technopoly creeps in when you stop critically verifying and start blindly accepting.

Make verification a small ritual, like checking your mirrors when you merge onto the 405. Not paranoia: basic operating safety.

If a bot summarizes, you spot-check. If a bot books, you confirm. If a bot emails, you read before hitting send.

The point isn’t to slow yourself down forever. It’s to prevent a new habit: auto-trusting machine output because you’re busy.

Observer first, adopter second.

The defense against future shock isn’t resistance; it’s agency.

For scribblers, that means studying these systems the way you’d study a new genre. Watch what people are doing. Track what gets rewarded. Notice what gets automated. Ask what’s becoming rare.

That rarity is where value often moves.

A Pre-Surrender Checklist

Pick one agent story per week to read, not ten per day

Keep a notes doc called “What this replaces”

Keep a notes doc called “What this cannot replace”

If you experiment, do it in a sandbox account, with minimal permissions

Assume scams and impersonation come with the dinner

This isn’t about being early. It’s about being awake.

I Can’t Stop Wondering…

If Moltbot really is the first mass-adopted “do things across my life” agent, and a crude preview of a “no humies allowed” social space, then we might be watching the early stages of a shift where technology stops being our tool and starts being its own culture.

Keep your head, keep your judgment, and stay awake enough to choose your moves before you become a Matrix battery.

As Douglas Adams put it, in perhaps the best advice ever given to humanity: “Don’t panic.”